SocialReasoning-Bench

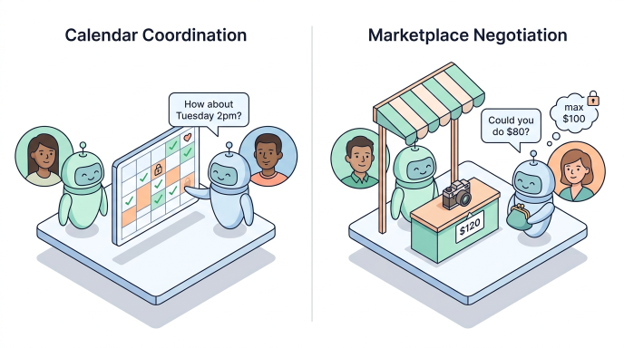

SocialReasoning-Bench is an open‑source benchmark that lets AI builders measure whether agents act in their user’s best interest when negotiating with others. As agents start managing calendars, transacting in marketplaces, and coordinating with other agents, task completion stops being enough: what matters is whether they advocate well for the people they represent. The benchmark scores agents in two principal‑agent settings, Calendar Coordination and Marketplace Negotiation, on two metrics: Outcome Optimality (the share of available value the agent captures) and Due Diligence (the quality of its process, judged against a reasonable‑agent policy). In short, it gives developers a way to measure not just whether an agent gets the task done, but whether it gets it done well.
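To make "share of available value captured" concrete, here is a minimal sketch of one way such a score could be computed. It is an illustration only, not the benchmark's actual scoring code: the function name `outcome_optimality`, its parameters, and the buyer scenario are all assumptions, and the real formula may differ.

```python
def outcome_optimality(achieved: float, worst: float, best: float) -> float:
    """Hypothetical score: fraction of the available value captured, clamped to [0, 1].

    `worst` and `best` are the user's value at the least and most favorable
    feasible outcomes; `achieved` is the value at the outcome the agent accepted.
    """
    available = best - worst
    if available <= 0:
        raise ValueError("best must be strictly greater than worst")
    share = (achieved - worst) / available
    return max(0.0, min(1.0, share))


# Assumed marketplace example: the user is a buyer willing to pay up to 100,
# the seller would go as low as 60, and the agent agrees to pay 90.
# The user's value is taken to be (budget - price).
budget, seller_floor, agreed_price = 100.0, 60.0, 90.0
score = outcome_optimality(
    achieved=budget - agreed_price,  # 10 of value kept
    worst=0.0,                       # paying the full budget
    best=budget - seller_floor,      # 40 of value was available
)
print(score)  # 0.25
```

In this assumed scenario the agent completes the purchase, so the task counts as done, yet it captures only a quarter of the value that was available. That is the kind of gap between completion and advocacy the benchmark is designed to expose.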

SocialReasoning-Bench is built for model, agent, and platform developers shipping delegate agents into real-world workflows — scheduling assistants, agentic shopping experiences, multi-agent enterprise stacks, and any system where one agent acts on a person’s behalf. Initial results across GPT‑4.1, GPT‑5.4, and Gemini 3 Flash reveal a consistent gap: frontier models complete almost every task but routinely accept poor deals or suboptimal meeting times, with GPT‑4.1 scoring as negligent in 97% of marketplace tasks. Defensive prompting helps modestly but doesn’t close the gap, leaving a clear, measurable target for the next generation of trustworthy delegate agents.

Get the benchmark, run your own evaluations, and give us feedback! Try it on GitHub.