VibeVoice-ASR

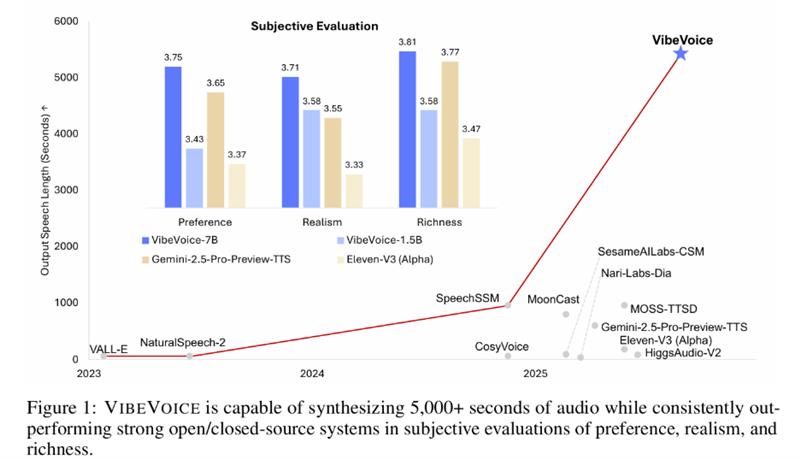

Speech synthesis systems have made rapid progress in recent years, producing natural, high-fidelity audio for short, single-speaker utterances. However, existing approaches still struggle to scale to long-form, multi-speaker conversations, such as podcasts or multi-character audiobooks, where natural turn-taking and contextual continuity are essential. Traditional systems can concatenate individual utterances to simulate dialogue, but the result is often disjointed or unstable.